Alexa Live and the Future of Ambient Computing

Amazon last week held its Alexa Live 2021 developer conference, which would have been a lot more fun if during the event the Amazon Echo Show in my office hadn’t gone insane.

During the keynote, they started rattling off all the new commands that the Echo products could follow. My Echo could hear and tried to respond to those commands. When they got to “start the car,” fortunately I hadn’t loaded that skill, or I’d have likely found my car in the neighbour’s pool.

Amazon is doing some fantastic things with Alexa. Still, I do wish they’d tighten up user authentication so that anyone yelling anything that sounds like Alexa doesn’t trigger a response that results in damage, like turning on one of my smart faucets, for instance, and flooding the house.

At some point, they’ll have to tell us to unplug our Echo devices when they have a presentation like this, or else we’ll have a crapload of houses with lights going on and off; water being turned on and left running; and cars starting and pumping CO2 into the garages and homes that house them, which would all end badly.

I do think one of the things they need to prioritize is more alternative name choices for Alexa.

Let’s talk about the promise and some of the problems with the coming wave of ambient computing devices, and we’ll close with my product of the week, Amazon’s 2nd Generation Echo Frames.

Ambient Computing: Apple vs. Amazon

Ambient computing is where your computing resource is always around you and always available for engagement, with the primary interface, at least currently, being voice.

Both Apple (Siri) and Amazon are looking at ambient computing as a future expansion opportunity, but Amazon is significantly outperforming Apple because it gets that the race isn’t to revenue; it’s to coverage. Apple charges a ton for its devices to maintain its massive device profits, while Amazon sells its related hardware closer to cost and makes money on licensing and retail sales through the devices.

The result is that Amazon will pretty much partner with anyone and support almost anything with its platform, including iPhones. It is driving the Voice Interoperability Initiative, which has 90 members, including Facebook, Intel, Qualcomm, Sonos and Garmin. In contrast, you don’t find Siri anywhere near as prevalent.

It isn’t just Apple that Amazon is out-executing; it’s Google and Microsoft as well. The winner in this race will be the one that gets on the most deployed hardware, not the vendor who was arguably first to the segment with Siri. There is a reasonable chance that Amazon’s Alexa app store could eventually eclipse both Apple’s and Google’s, particularly if governments force both vendors to support alternative stores more aggressively, which is likely.

It is interesting to note that both Amazon and Microsoft seem to be anticipating this opportunity.

Our Future With Alexa

Much of the discussion last week was about what Amazon has planned for Alexa over the coming months and years, and the anticipated expansion is aggressive.

For instance, you’ll be able to order from a restaurant on the device (McDonald’s is the first to implement this) and have it automatically track and report the progress of your order until it arrives. Imagine just saying, “Alexa, order my usual from McDonald’s,” and then having the display (Echo Show) switch to a tracking map that shows where your food currently is and its progress toward your table.

The Alexa technology is getting more human-seeming as well — with improved word emphasis and inflexion — so that over time it will increasingly sound human and not robotic. This enhancement will make interacting with the devices feel far more natural.

This depth of understanding should improve Alexa’s ability to determine what you want by comprehending words and voice inflexion, resulting in improved accuracy over time.

Event-based trigger skills are coming. An event-based skill is one where Alexa knows what you are doing and why you are doing it, and then automatically provides recommendations based on that knowledge.

For example, it sees you are leaving the house, and you’ve left it unlocked, and asks if you want to lock the house. If you say yes, then your house is remotely locked. Or you go out for a run; Alexa knows the kind of music you like to listen to while running and suggests a new playlist that automatically starts when you accept that choice.

When you order food for pickup, it reminds you when the order is ready, then asks for your parking spot number after you arrive and passes it to the store, so the staff knows where to find you for handoff.

Music and entertainment are getting some exciting additions. For instance, you’ll be able to participate in Q&A events with your Alexa device, take real-time surveys, verbally make comments, and interact with the artists more often. You’ll be able to make song selections for radio shows and dedicate selections to others (much like we used to do using phones), just by chatting with your Alexa device using a new service called Spotlight under Amazon Music.

Games are a big focus of Alexa’s evolution, and the products will become voice-driven gaming platforms over time. The nice thing about voice-driven games is you can arguably play them safely while doing other things like driving, helping you to remain alert during long drives or at night. Or, use the games to pass time when your hands are otherwise engaged.

Tracker integration is improving. Alexa already works with Tile but is expanding to other devices, like those from Samsung, so you can say “Alexa, find my phone,” and the phone will ring while Alexa tells you the device’s general location so you can get close enough to hear that ring.

Finally, Alexa is moving into healthcare for monitoring and assisting people in need of medical help.

Alexa is already moving into TVs, cars, smartphones, and an increasing number of additional third-party devices.

Wrapping Up

Amazon has accelerated its ambient computing initiative much as Microsoft once drove Windows and Google drove search. Alexa is already the de facto standard for ambient computing, and it is spreading like wildfire. It will become more of a companion than an assistant as time goes by, increasingly knowing far more about you and responding to your wishes before you even know what those wishes are.

We’re looking at a near-term future of talking to our computers and their increasing capability to talk to us. Amazon Alexa is the spearhead of this initiative, and its progress is nothing short of phenomenal.

Amazon Echo Frames

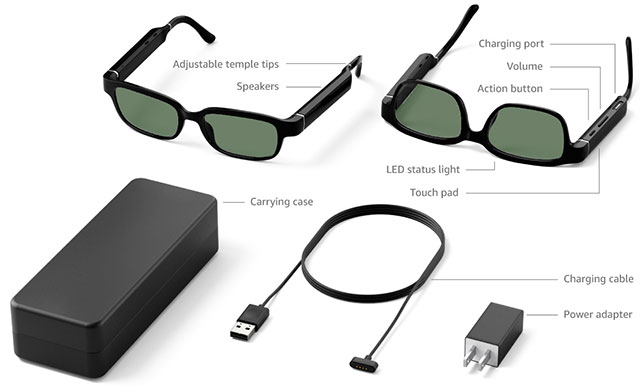

In advance of last week’s event, Amazon sent me a pair of Echo Frames with dark lenses, which offer a counterpoint to the idea that these devices need to be all around us.

With these glasses, Alexa is wherever I am. The glasses connect to the Alexa app on your smartphone, putting the related Alexa experience wherever you are.

The speakers are decent when playing music, though I wonder if the Echo Buds might be a better choice, since you can use the glasses you already have and the incoming sound is more private (these glasses use external speakers).

The glasses are waterproof, come with polarized lenses, and cost $209. I would expect future generations of these glasses to have AR capabilities with integrated displays, auto-dimming lenses, and a greater choice of frame designs (for now, there is only one frame model).

But if Alexa is on your body, do you need any additional Echo devices, especially if the glasses could show data to the user? Wouldn’t you use the glasses to make sure Alexa is where you are rather than having to buy multiple Echo devices?

Because the Echo Frames, along with the Echo Buds (costing $140), potentially change the evolution of Echo devices and are darned helpful, they are my product of the week.